Expectation Management in the Public Sector

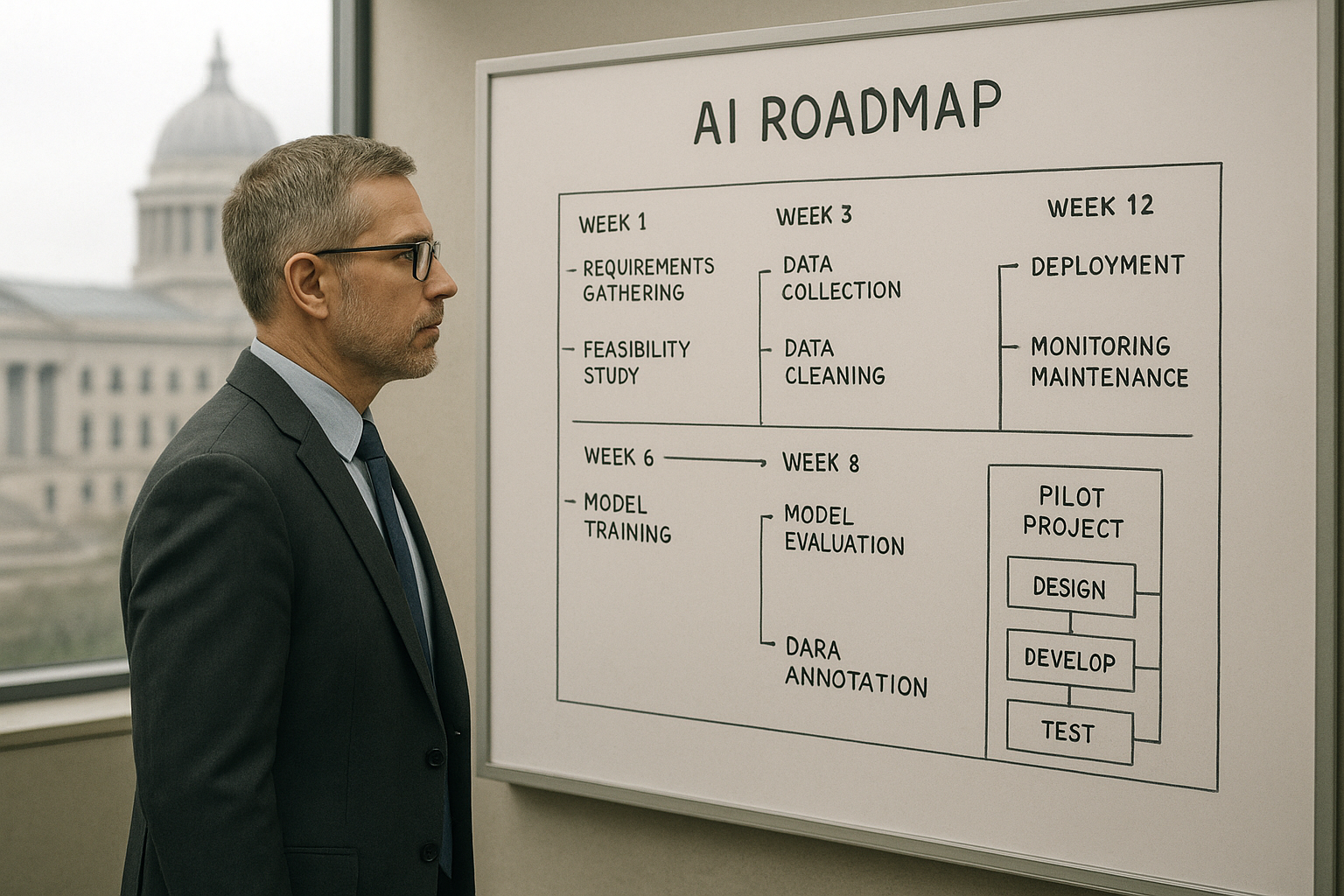

When agency leaders hear about AI today, the headlines promise dramatic leaps in efficiency and instant automation of complex workflows. The reality inside government organizations is different: strict appropriation calendars, records retention obligations, FOIA requests, and auditability requirements all shape what is feasible and how fast. A government AI roadmap that ignores procurement timelines and transparency obligations is a plan for disappointment.

Agency CIOs and program managers should treat narratives about consumer AI as inspiration rather than a blueprint. Consumer-focused LLMs and chat interfaces are optimized for speed and scale in unconstrained environments, not for defensible decision trails or secure handling of personally identifiable information. To manage expectations, set milestones that align with budget cycles and appropriation timelines and require traceability that satisfies oversight offices. When you frame success around demonstrable changes—reduced cycle time for specific services, fewer manual transfers between teams, improved citizen satisfaction—you create achievable targets that respect both fiscal and compliance realities.

Responsible AI practice in government means building auditability and explainability into every step. That involves simple, enforceable rules about logging, content provenance, and records retention so the agency can respond to oversight and public requests without scrambling to reconstruct what an AI system did on a particular date.
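As a minimal sketch of what a logging rule like this could look like in practice, the Python below builds one audit entry per AI interaction and appends it to a JSON-lines file. The field names, the `make_audit_record` and `append_audit_record` helpers, and the file-based storage are all illustrative assumptions; the retention period must come from the agency's own records schedule.

```python
import hashlib
import json
from datetime import datetime, timedelta, timezone


def make_audit_record(system_id, model_version, prompt, output,
                      retention_days, now=None):
    """Build one audit entry for a single AI interaction.

    Field names are hypothetical; retention_days is supplied by the caller
    because the agency's records schedule governs the real value.
    """
    now = now or datetime.now(timezone.utc)
    return {
        "timestamp": now.isoformat(),
        "system_id": system_id,
        "model_version": model_version,
        # Hashes let reviewers confirm that separately stored content is
        # unchanged without copying sensitive text into the log itself.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
        "retain_until": (now + timedelta(days=retention_days)).date().isoformat(),
    }


def append_audit_record(path, record):
    """Append the record as one JSON line; earlier lines are not rewritten."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")
```

With entries like these, answering "what did the system do on a particular date" becomes a filter over timestamps rather than a reconstruction exercise. A production system would likely write to a managed, access-controlled store instead of a local file.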

Pick the Right First 3 Use Cases

Choosing the right first use cases is the fastest way to build momentum. The three priorities we recommend for agencies starting out are intake triage for citizen requests, document classification and extraction, and internal knowledge search for staff. Each of these delivers visible customer service improvements without exposing high-risk decision-making to immature models.

Intake triage reduces the time a citizen waits to reach the correct team. A lightweight automation layer can route requests, surface missing attachments, and flag urgent matters. Document classification and extraction automate routine data capture from forms and letters, cutting backlog and freeing caseworkers for exceptions. Knowledge search connects staff to policy, prior decisions, and FAQs, which reduces rework and speeds case resolution.
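To make the intake triage idea concrete, here is a small rule-based sketch that routes a request, lists missing attachments, and flags urgency. The routing keywords, team names, urgent terms, and required attachments are hypothetical placeholders; a real deployment would draw them from agency policy and might pair a trained classifier with human review.

```python
from dataclasses import dataclass, field

# Hypothetical routing rules: keyword found in the request -> receiving team.
ROUTES = {"permit": "permitting", "benefit": "benefits", "tax": "revenue"}
# Hypothetical terms that mark a request as urgent.
URGENT_TERMS = ("eviction", "shutoff", "deadline")
# Hypothetical attachments each team needs before it can start work.
REQUIRED_ATTACHMENTS = {
    "permitting": {"site_plan"},
    "benefits": {"proof_of_income"},
}


@dataclass
class TriageResult:
    team: str
    urgent: bool
    missing_attachments: list = field(default_factory=list)


def triage(text, attachments):
    """Route a request by keyword, flag urgency, and list missing attachments."""
    lowered = text.lower()
    # First matching keyword wins; anything unmatched goes to a general queue.
    team = next((t for kw, t in ROUTES.items() if kw in lowered), "general_intake")
    urgent = any(term in lowered for term in URGENT_TERMS)
    missing = sorted(REQUIRED_ATTACHMENTS.get(team, set()) - set(attachments))
    return TriageResult(team, urgent, missing)
```

Keeping the rules in plain data structures means program staff can review and change them without touching the routing logic, and every routing decision can be explained by pointing at the rule that fired.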

Don’t underestimate equity wins: language support and accessibility features are low-friction improvements that expand access. Prioritize multilingual intake and assistive formats early, and you will show measurable service improvement while meeting statutory access obligations.

Quantify benefits in the terms your stakeholders understand: cycle time in days, percentage backlog reduction, and citizen satisfaction scores. These metrics become proof points that justify further investment in public sector automation and support an agency CIO's AI strategy that is rooted in value.
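The first two of these metrics reduce to simple arithmetic. The sketch below shows one way to compute them, assuming each case is recorded as an (opened, closed) pair of dates and that the median is the chosen summary. The function names are illustrative.

```python
from datetime import date
from statistics import median


def cycle_time_days(opened, closed):
    """Calendar days between a case being opened and closed."""
    return (closed - opened).days


def median_cycle_time(cases):
    """Median cycle time over an iterable of (opened, closed) date pairs."""
    return median(cycle_time_days(opened, closed) for opened, closed in cases)


def backlog_reduction_pct(before, after):
    """Percentage drop in open cases relative to the baseline count."""
    if before <= 0:
        raise ValueError("baseline backlog must be positive")
    return 100.0 * (before - after) / before
```

For example, a backlog that falls from 400 open cases to 300 is a 25 percent reduction. The median is used here because a handful of long-running cases can distort an average.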

Data Readiness and Responsible AI by Default

One of the reasons projects stall is data immaturity. A pragmatic government AI roadmap begins with a lightweight data inventory and strong data minimization principles. Identify the inputs required for your initial use cases and make conservative decisions about what data needs to leave agency boundaries. For generative systems, redact PII from prompts and ensure that any third-party vendor cannot inadvertently retain sensitive content.
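A minimal sketch of prompt redaction follows, assuming regular expressions for three common US-format identifiers. The patterns and the `redact` helper are illustrative and far from exhaustive; names, addresses, and case numbers would need additional handling, and pattern matching alone should not be treated as a complete PII control.

```python
import re

# Illustrative patterns only; they cover common US formats, not every variant.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\b\d{3}[ .-]\d{3}[ .-]\d{4}\b"),
}


def redact(text):
    """Replace matches with a bracketed label and count replacements per label."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts
```

Running the redaction before a prompt leaves the agency boundary, and logging only the per-label counts, gives reviewers evidence that the control ran without storing the sensitive values themselves.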

Provenance matters. Implement content watermarking and metadata tagging strategies so that generated communications can be identified and traced back to the system that produced them. Publish model cards and maintain a public FAQ that describes what models do, where they are used, and the limitations stakeholders should expect. Those artifacts support both transparency and the agency’s legal obligations.

Procurement-Smart Pilots

Procurement rules are not a barrier to experimentation if pilots are scoped smartly. Structure contracts with modular scopes: an initial proof phase, followed by options for expansion and a scaling phase. This lets you use competitive procurement vehicles to test concepts without committing an entire appropriation to unproven outcomes.

When working with LLMs or other hosted models, require security and privacy addenda in vendor agreements. Align evaluations with FedRAMP or StateRAMP where possible and include explicit clauses about data handling and breach notification. Include performance SLAs and clear exit criteria in statements of work so the agency can measure ROI and terminate or pivot if a pilot does not meet predefined success metrics.

Government teams procuring LLMs should insist on vendor commitments not to retain agency data unless explicitly authorized, and to provide technical details about model provenance and training data constraints where feasible. Those requirements keep pilots compliant and defensible.

Change Management and Upskilling for Frontline Staff

Tools alone do not create sustained improvements; people do. Invest in role-based AI literacy so caseworkers, supervisors, and program managers understand both the capabilities and the failure modes of the systems they will use. Teach prompt safety, exception handling, and escalation paths so staff can intervene effectively when automation is uncertain.

Co-design with frontline staff from day one. That reduces resistance and surfaces edge cases before a system is scaled. Practical job aids—cheat sheets, quick decision trees, and in-application guidance—accelerate adoption. Put simple feedback loops in place so users can report incorrect outputs or confusing behavior; measure adoption, rework rates, and error reduction as part of your program dashboard.

How We Help Agencies Deliver Early Wins

For agencies starting to build a CIO-led AI strategy, focused support makes the difference between stalled pilots and meaningful improvements. A short AI strategy sprint aligned to budget calendars builds a pragmatic government AI roadmap that prioritizes quick wins and compliance. Automation discovery workshops can identify intake and processing opportunities and produce low-code prototypes that show value to stakeholders without lengthy procurement cycles.

Secure AI development and MLOps tailored to government clouds ensure models run where policy requires, and staff enablement programs build the internal capability to operate and govern systems over time. This approach is designed to convert early public sector automation wins into sustainable programs while maintaining the standards of responsible AI practice in government.

Agency CIOs who approach AI without the hype and with a concrete plan that aligns procurement, data, and people will find that measurable citizen service improvements are achievable. The path is less about chasing the newest model and more about delivering the right capabilities, responsibly and repeatably, within the constraints that define public service work.
