The board has asked for an AI agenda. The CFO is calculating capital impact while the CIO is excited about transformer models and automation platforms. Between the two sits a familiar danger: expectations running faster than the organization can responsibly deliver. For mid-market banks and insurers, the lure of dramatic outcomes from flashy vendor demos clashes with a reality of regulatory scrutiny, fragmented data, and legacy cores that make true AI ROI work more iterative than instantaneous.

Why Expectations Run Hotter Than Returns in Financial Services

Hype feeds itself. A viral case study of a large institution reducing call center costs by 40% becomes a boardroom tagline, and vendors amplify it with optimistic timelines. But regulated products (retail banking, commercial lending, and insurance claims) carry high-stakes compliance obligations. KYC/AML verifications, anti-fraud systems, and model risk management lengthen time-to-value because every change needs controls, documentation, and often independent validation. That is why conversations about AI ROI in financial services frequently stall: the numerator (benefit) is visible in demos, but the denominator (cost, controls, and risk mitigation) stays opaque without a cross-functional plan.

Data fragmentation and legacy cores further constrain GenAI efficacy. Models thrive on clean, joined datasets; many mid-market institutions wrestle with siloed ledgers and inconsistent document standards. Add the risk officer’s caution—insisting on explainability and audit trails—and you have a natural tension between speed and safety. Managing AI expectations begins with acknowledging these limits openly rather than treating them as late-stage surprises.

A CFO–CIO Value Thesis: Define, Bound, and Measure ROI

A practical CFO–CIO AI partnership starts with a shared value thesis: a focused hypothesis that links specific GenAI or automation use cases to P&L drivers and capital planning. Instead of abstract claims about machine learning, translate potential outcomes into reductions in cost-to-serve, improvements to loss ratio, lower fraud losses, or higher NPS and retention. When the business goal is clear—say, lowering average handle time or reducing claims leakage—the finance team can construct credible ROI models that incorporate operating expenses, projected adoption curves, and risk mitigation costs.

Differentiate leading indicators from lagging outcomes. Leading indicators such as handle time, first-contact resolution, and triage accuracy give early signals during pilots. Lagging measures such as realized cost savings, claim recoveries, or updated loss ratios validate the sustained impact. Build confidence intervals into forecasts and run sensitivity analysis across adoption rates, error rates, and regulatory changes. A disciplined CFO–CIO AI strategy uses those bands to decide how much capital to commit and when to accelerate or pause.
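One way to picture the sensitivity analysis is a small scenario grid. The sketch below is illustrative only: the function names, the benefit formula (gross benefit scaled by adoption and discounted by error rate), and all figures are assumptions, not a prescribed finance model.

```python
from itertools import product


def roi(adoption_rate, error_rate, gross_benefit, total_cost):
    """Simple ROI = (realized benefit - total cost) / total cost.

    Assumption: realized benefit scales linearly with adoption and is
    discounted by the share of outputs that are wrong and need rework.
    """
    realized = gross_benefit * adoption_rate * (1.0 - error_rate)
    return (realized - total_cost) / total_cost


def sensitivity_band(adoption_rates, error_rates, gross_benefit, total_cost):
    """Evaluate ROI for every (adoption, error) pair; return (low, high, grid)."""
    grid = {
        (a, e): roi(a, e, gross_benefit, total_cost)
        for a, e in product(adoption_rates, error_rates)
    }
    return min(grid.values()), max(grid.values()), grid


# Hypothetical pilot: 1,000,000 gross annual benefit, 250,000 all-in cost
# (run cost plus controls and validation).
low, high, grid = sensitivity_band(
    adoption_rates=[0.25, 0.5],
    error_rates=[0.0, 0.5],
    gross_benefit=1_000_000,
    total_cost=250_000,
)
print(low, high)  # -0.5 1.0
```

A band that spans negative and positive ROI, as in this made-up case, is a signal to commit capital in stages rather than all at once.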

90-Day Pilot Portfolio With Stage Gates

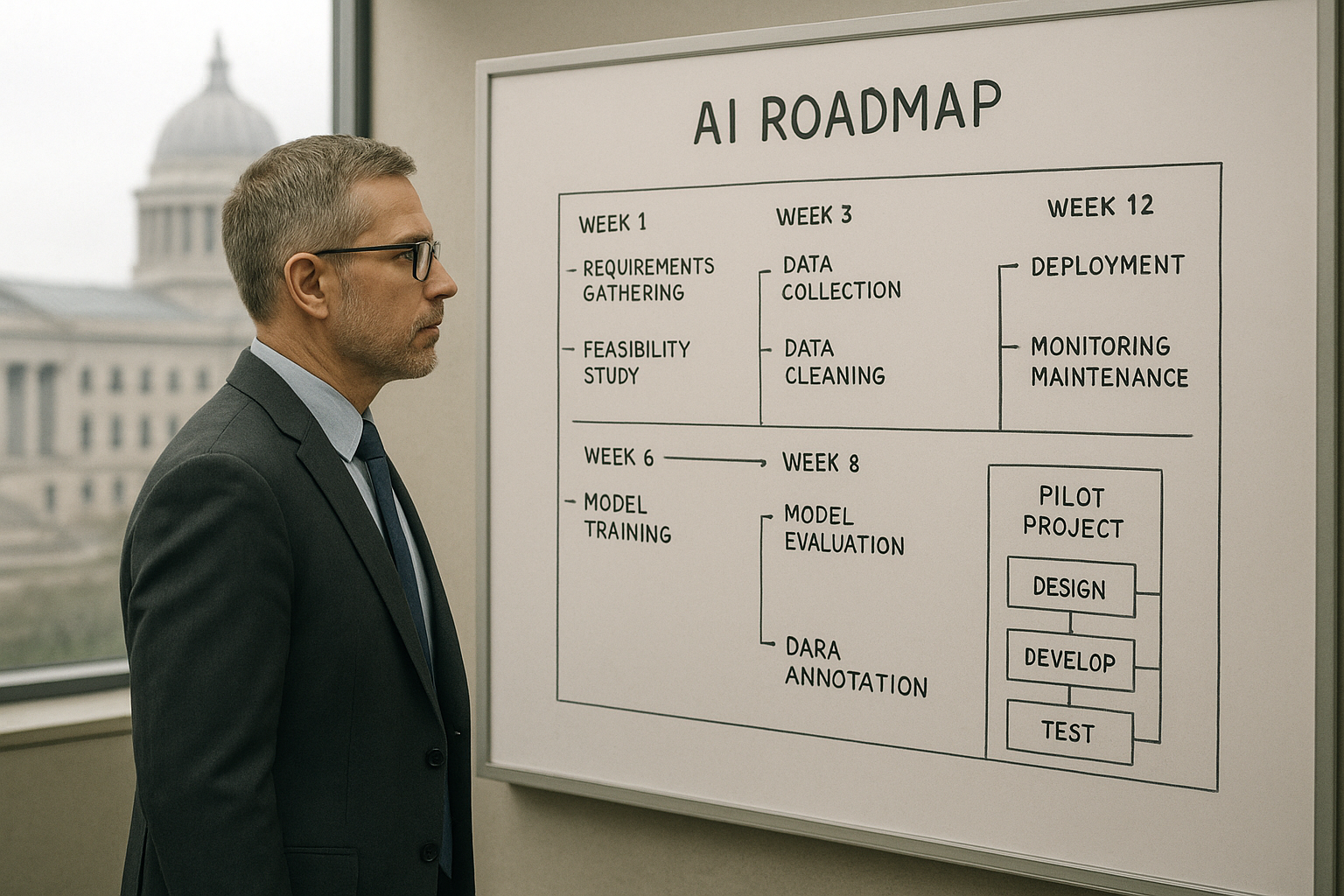

Large moonshots look attractive on slides but often fail when they collide with reality. A more resilient path is a 90-day pilot portfolio: run three to five targeted pilots that are low-risk and regulation-friendly, each designed with explicit go/kill/scale criteria. Candidates for this portfolio include call summarization to reduce agent time, intelligent claims triage to speed disposition, and automated extraction of KYC documents to compress onboarding timelines while preserving audit trails.

Each pilot needs stage gates aligned to measurable checkpoints: initial data readiness and privacy assessments, human-in-the-loop validation thresholds, and a compliance sign-off before any model touches production data. Financial guardrails are essential: cap compute spend per pilot, isolate experiments in sandboxes, and require red-teaming and adversarial testing before scaling. These constraints protect capital while still creating a rapid learning loop. The CFO can monitor cost telemetry against expected uplift, and the CIO can ensure technical debt is not growing unchecked.
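The go/kill/scale criteria can be written down as an explicit rule so that both finance and IT read the same gate. The following is a minimal sketch under stated assumptions: the `PilotStatus` fields, the 0.90 accuracy threshold, and the specific kill conditions are hypothetical and would be set by each institution's steering committee.

```python
from dataclasses import dataclass


@dataclass
class PilotStatus:
    name: str
    data_ready: bool             # data readiness and privacy assessment done
    compliance_signed_off: bool  # sign-off before touching production data
    hitl_accuracy: float         # share of outputs accepted by human reviewers
    compute_spend: float         # spend to date
    compute_cap: float           # approved cap for this pilot
    red_team_passed: bool        # adversarial testing completed


def gate_decision(pilot, min_accuracy=0.90):
    """Return 'kill', 'scale', or 'go' (continue in the sandbox)."""
    # Financial guardrail: breaching the compute cap stops the pilot.
    if pilot.compute_spend > pilot.compute_cap:
        return "kill"
    # Quality guardrail: reviewers reject too many outputs.
    if pilot.hitl_accuracy < min_accuracy:
        return "kill"
    # Scaling requires every gate, including red-teaming, to be cleared.
    if pilot.data_ready and pilot.compliance_signed_off and pilot.red_team_passed:
        return "scale"
    return "go"


pilot = PilotStatus("call-summarization", True, True, 0.94, 8_000.0, 10_000.0, False)
print(gate_decision(pilot))  # go
```

Keeping the rule this small is deliberate: a gate that fits on one screen is easier to audit and harder to argue around at the 90-day review.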

Practical AI Governance for CFOs and CIOs

Governance doesn’t have to be a heavyweight bureaucracy to be effective. Start with a pragmatic set of controls that reduce reputational and regulatory risk without stalling momentum. Maintain a model inventory with lineage and versioning so auditors can trace outputs back to inputs. Establish policies for PII handling, document retention, and prompt-injection defenses for any LLM interfaces. For models that influence credit, pricing, or claims, capture explainability artifacts and the assumptions used in any scoring.

Financial controls are equally critical. Treat AI spend as a blend of OPEX and CAPEX—define approval thresholds for cloud consumption and third-party model licensing, and instrument cost telemetry so finance can see spend by pilot and by use case. Third-party risk reviews for LLM providers must be part of vendor selection: understand hosting models, data residency, and the provider’s incident-response commitments.
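Cost telemetry by pilot and by use case can start as a simple roll-up of tagged spend events checked against approval thresholds. This sketch assumes a hypothetical event shape (`pilot`, `use_case`, `cost`) and made-up thresholds; real cloud billing exports will look different.

```python
from collections import defaultdict


def spend_by(events, key):
    """Sum the 'cost' field of spend events grouped by the given tag."""
    totals = defaultdict(float)
    for event in events:
        totals[event[key]] += event["cost"]
    return dict(totals)


def needs_approval(totals, thresholds):
    """List the groups whose spend exceeds their threshold.

    Groups with no configured threshold are treated as having a
    threshold of zero, so any untagged or unplanned spend is flagged.
    """
    return sorted(g for g, total in totals.items() if total > thresholds.get(g, 0.0))


# Hypothetical spend events tagged at the point of consumption.
events = [
    {"pilot": "call-summarization", "use_case": "servicing", "cost": 1200.0},
    {"pilot": "claims-triage", "use_case": "claims", "cost": 3000.0},
    {"pilot": "call-summarization", "use_case": "servicing", "cost": 800.0},
    {"pilot": "kyc-extraction", "use_case": "onboarding", "cost": 500.0},
]

by_pilot = spend_by(events, "pilot")
by_use_case = spend_by(events, "use_case")
flagged = needs_approval(
    by_pilot, {"call-summarization": 2500.0, "claims-triage": 2500.0}
)
print(flagged)  # ['claims-triage', 'kyc-extraction']
```

Here `kyc-extraction` is flagged because it has no approved threshold at all, which is the kind of unplanned spend finance would want surfaced early.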

Operating Model and Skills: Finance + IT as Co‑Owners

To move from pilots to enduring capability, define decision rights and a shared operating model where finance and IT are co-owners. Stand up a joint steering committee with clear RACI definitions covering risk, architecture, product ownership, and procurement. Make the CFO accountable for ROI thresholds and capital budgeting; make the CIO accountable for technical hygiene and delivery pacing.

Skills and literacy matter. Equip finance teams with targeted AI fluency—how to prompt models responsibly, how to validate outputs, and how to review for bias. IT teams need runbooks for exception handling and model performance drift, and escalation paths that include risk and compliance. These simple, practical steps reduce the chance that a promising pilot collapses into a regulatory incident or an uncontrolled cost center.

How We Help: Strategy, Automation Discovery, and Build‑Operate‑Transfer

We work with mid-market financial services firms to turn these principles into executable plans. Our executive AI strategy workshops help CFOs and CIOs align on a shared value thesis and build robust AI ROI models for financial services that account for compliance and capital constraints. During process automation discovery, we map claims, underwriting, and servicing workflows to identify where AI-driven process automation can safely improve throughput and reduce manual work without increasing risk.

For delivery, we favor a compliance-by-design approach: rapid AI development paired with MLOps and governance artifacts so models are auditable from day one. When appropriate, we operate initial live capabilities under a build-operate-transfer model so internal teams can absorb knowledge, controls, and tooling before taking full ownership. That approach balances speed with institutionalization—getting benefits to the P&L while ensuring sustainable control.

Boards will continue to pressure management for AI initiatives, and vendors will continue to sell transformation. The productive response is a CFO–CIO partnership built around a measurable, bounded agenda: a portfolio of low-risk pilots, clear financial and compliance guardrails, and an operating model that shares ownership. That is how realistic expectations become measurable outcomes, and how GenAI moves from a boardroom promise to an accountable line item on the balance sheet.