2025 felt like the year the promise of enterprise AI stopped being theoretical and started leaving practical footprints in hospitals and retail operations. The breakthroughs that dominated headlines — clinical LLMs tuned to medical knowledge, high-accuracy imaging models, and ambient scribing that can capture consults — are real technologies with immediate operational implications. But translating breakthroughs into dependable, compliant, and revenue-driving systems requires disciplined roadmaps. This post translates the most consequential advances of 2025 into two pragmatic playbooks: one for hospital leaders beginning their AI journey, and one for retail teams scaling personalization and intelligent inventory.

What 2025’s AI Breakthroughs Mean for Hospitals — A Starter Blueprint for CIOs (Starting Out)

Hospital CIOs and IT directors who are starting out need a clear sense of what’s real versus what’s marketing. In 2025, clinical LLMs emerged that can summarize literature, suggest evidence-based differentials, and draft notes; imaging models achieved high sensitivity and specificity for select read types; and ambient scribing systems moved from experimental to operational in several large health systems. These advances are meaningful, but they are not plug-and-play replacements for clinical judgment.

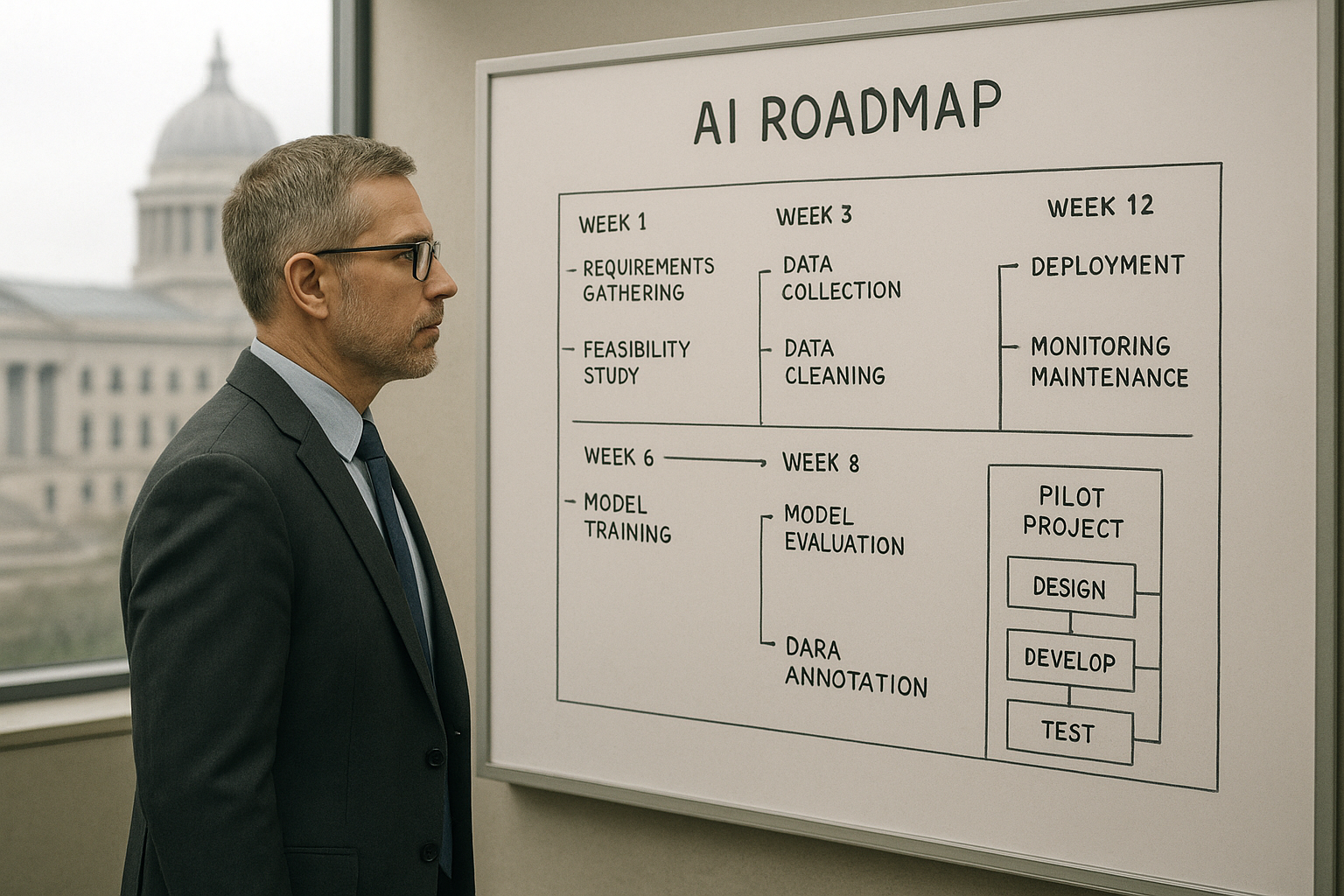

Begin by prioritizing starter use cases that balance impact with risk. Ambient scribing can return clinician time by reducing documentation burden, but it must be paired with human review and clear auditing. Prior authorization and revenue-cycle workflows are low-friction automation targets: AI that extracts structured data from notes and populates authorization forms can reduce denials and cycle time. Patient access chatbots and virtual front doors can smooth scheduling and triage, improving throughput when tied into EHR workflows. Coding support that suggests billing codes can accelerate billing, but it requires threshold checks and coder sign-off.
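
As a minimal sketch of the threshold check and coder sign-off described above, the routing logic could look like the following. The threshold value, the queue names, and the `CodeSuggestion` type are all hypothetical; a real threshold would need to come from local validation against coder-reviewed charts.

```python
from dataclasses import dataclass

# Hypothetical cutoff for illustration only; calibrate on local validation data.
AUTO_SUGGEST_THRESHOLD = 0.90


@dataclass
class CodeSuggestion:
    code: str          # suggested billing code (placeholder values in this sketch)
    confidence: float  # model confidence in [0, 1]


def route_suggestion(s: CodeSuggestion,
                     threshold: float = AUTO_SUGGEST_THRESHOLD) -> str:
    """Return the review queue for a suggestion.

    Nothing is auto-submitted: both queues end with a human coder signing off.
    """
    if not 0.0 <= s.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if s.confidence >= threshold:
        return "coder_confirm"      # pre-filled; coder confirms or corrects
    return "coder_full_review"      # suggestion shown only as a hint


print(route_suggestion(CodeSuggestion("CODE-A", 0.95)))
print(route_suggestion(CodeSuggestion("CODE-B", 0.40)))
```

The design choice worth noting is that the threshold only changes how much work the coder does, not whether a coder is involved.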

Data privacy and PHI handling are non-negotiable. HIPAA-compliant AI implementations typically start with strict de-identification rules, access controls, and data locality guarantees. Decide early on an on-prem versus private cloud strategy: on-prem solutions give maximum control over PHI, while private cloud vendors can offer compliant enclaves and robust managed services. For many hospitals just starting out, a hybrid model—keeping raw clinical data on-prem while consuming vendor models in a private cloud via vetted APIs—provides a pragmatic compromise.

Evaluation protocols must be rigorous and clinically meaningful. Build accuracy and safety tests aligned to clinical endpoints, not just predictive metrics. Include bias audits across demographics, track false-negatives in safety-critical pathways, and require human-in-the-loop sign-off criteria. A staged evaluation framework — retrospective validation, silent prospective monitoring, then supervised clinical pilot — helps manage risk while demonstrating value.
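
One way to make the bias audit and false-negative tracking concrete is to compute sensitivity and specificity per demographic group during retrospective validation. The sketch below assumes binary labels and a simple `(group, y_true, y_pred)` record layout, both of which are illustrative choices rather than a prescribed schema.

```python
from collections import defaultdict


def subgroup_metrics(records):
    """Compute per-group sensitivity, specificity, and false-negative counts.

    records: iterable of (group, y_true, y_pred) with binary labels 0/1.
    A metric is None when the group has no cases of the relevant class.
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for group, y_true, y_pred in records:
        c = counts[group]
        if y_true == 1:
            c["tp" if y_pred == 1 else "fn"] += 1
        else:
            c["tn" if y_pred == 0 else "fp"] += 1

    out = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["tn"] + c["fp"]
        out[group] = {
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
            "false_negatives": c["fn"],
        }
    return out


sample = [("A", 1, 1), ("A", 1, 0), ("A", 0, 0), ("B", 1, 1), ("B", 0, 1)]
for group, metrics in subgroup_metrics(sample).items():
    print(group, metrics)
```

Large gaps between groups, or any false negatives in a safety-critical pathway, would be a reason to hold the model at the retrospective stage rather than advance it to silent monitoring.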

Pilot-to-scale also needs an EHR integration pattern and clinical governance. Lightweight integrations using FHIR for data exchange and SMART on FHIR for context-aware apps make initial pilots less invasive. Define clinical governance up front: who approves model updates, what incident response looks like when an AI suggestion is wrong, and how to log and audit decisions for patient safety and regulatory review.
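
For the logging and audit requirement, a rough sketch of a structured audit record follows. The field names and the three allowed clinician actions are assumptions made for illustration; a real deployment would align them with the hospital's governance policy and its existing audit infrastructure.

```python
import json
from datetime import datetime, timezone

# Hypothetical set of clinician responses to an AI suggestion.
ALLOWED_ACTIONS = {"accepted", "edited", "rejected"}


def audit_entry(model_version, suggestion_id, clinician_id, action, now=None):
    """Build one JSON audit line recording how a clinician handled a suggestion."""
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    record = {
        "timestamp": timestamp,
        "model_version": model_version,   # ties the decision to a specific model
        "suggestion_id": suggestion_id,
        "clinician_id": clinician_id,
        "action": action,
    }
    return json.dumps(record, sort_keys=True)


print(audit_entry("model-v1", "sug-001", "clin-42", "edited"))
```

Recording the model version with every decision is what lets a governance committee trace an incident back to a specific model update.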

ROI levers in hospitals are tangible: saved clinician time, reduced prior-authorization denials, faster throughput in ambulatory clinics, and fewer coding errors. Quantify baseline workflows before deployment so you can measure the change afterward. Finally, choose partners who have proven clinical deployments and who offer a clear path to custom AI development and integration where gaps remain. Vendors can deliver rapid time-to-value, but a thin center of excellence capable of custom development ensures you can integrate AI into local workflows and governance practices.

Retail After 2025 — Scaling AI for Hyper-Personalization and Inventory Agility (Scaling)

Retail teams scaling AI in 2025 must turn lessons into a unified execution model. The year delivered significant improvements in generative models for content creation, real-time demand-sensing models, and cross-system interoperability. For CMOs and CTOs, the first imperative is a unified data layer: a customer data platform (CDP) tightly integrated with product catalogs and event streams. Identity resolution across channels underpins both retail AI personalization and accurate measurement.

Generative AI transformed content operations: teams can generate creative variants at scale, but guardrails are essential. Brand safety controls, human review workflows, and style guides embedded in model prompts keep plausible but off-brand content from reaching customers. Use GenAI to create personalization variants, then subject them to rapid A/B testing within an experimentation framework so creative outputs are evaluated on engagement and conversion rather than assumed effective.
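
To evaluate a creative variant on conversion rather than assume it works, one common approach is a two-proportion z-test on the A/B results. The sketch below is one standard option, not the only valid test, and the sample counts are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates.

    Returns (absolute lift of B over A, z statistic, p-value).
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return p_b - p_a, 0.0, 1.0  # no variation at all, nothing to test
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail area
    return p_b - p_a, z, p_value


# Illustrative numbers only: control vs. a GenAI-produced variant.
lift, z, p = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=150, n_b=1000)
print(f"lift={lift:.3f} z={z:.2f} p={p:.4f}")
```

Fixing the sample size before the test starts, rather than stopping when the p-value first dips, keeps the result honest.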

Next-best-action engines that combine propensity models with business rules are the workhorses of modern personalization. These engines should be built with an experimentation mindset: serve recommendations, measure incremental lift, and iterate. Integrate such engines with adtech and martech through standardized APIs, and run measurement in clean rooms when sharing data with partners. Clean rooms and privacy-preserving analytics are central to a sound first-party data strategy, enabling measurement without exposing raw PII.
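
A minimal sketch of how propensity scores and business rules might combine in a next-best-action selector is shown below. The action names, margins, blocklist, and minimum-propensity rule are hypothetical; the scoring here is plain expected margin, which is one reasonable objective among several.

```python
def next_best_action(propensities, margins, blocked=frozenset(),
                     min_propensity=0.05):
    """Pick the eligible action with the highest expected margin.

    propensities: {action: probability the customer converts}
    margins:      {action: margin earned per conversion}
    blocked:      actions excluded by business rules (e.g. out of stock)
    Returns None when no action has positive expected value.
    """
    best, best_value = None, 0.0
    for action, p in propensities.items():
        if action in blocked or p < min_propensity:
            continue  # business rules filter before scoring
        value = p * margins.get(action, 0.0)
        if value > best_value:
            best, best_value = action, value
    return best


scores = {"discount": 0.30, "bundle": 0.10, "email": 0.02}
margin = {"discount": 2.0, "bundle": 8.0, "email": 50.0}
print(next_best_action(scores, margin))                      # bundle
print(next_best_action(scores, margin, blocked={"bundle"}))  # discount
```

Note that the highest-propensity action ("discount") loses to "bundle" once margin is considered, which is the kind of outcome an incremental lift test should then confirm.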

Inventory intelligence moved from batch forecasting to continuous demand-sensing in 2025. Models that ingest point-of-sale, web signals, weather, and local events can update store and SKU allocations in near real time. Pair demand forecasts with optimization engines for markdowns and fulfillment allocation to reduce stockouts and margin erosion. The tangible metrics here are reduced stockouts, improved sell-through, and less margin lost to emergency sourcing.
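
To illustrate the shift from batch forecasting to continuous updates, the sketch below uses simple exponential smoothing as a stand-in for a production demand-sensing model, followed by a proportional allocation step. Both functions, the smoothing factor, and the store names are assumptions for illustration; forecasts are assumed to be non-negative.

```python
def update_forecast(previous, observed, alpha=0.3):
    """Blend the latest observed demand into the running forecast.

    This is simple exponential smoothing, a deliberately basic substitute
    for a model that would also use web signals, weather, and events.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    return alpha * observed + (1 - alpha) * previous


def allocate(units, forecasts):
    """Split available units across stores in proportion to forecast demand.

    Uses the largest-remainder method so integer allocations sum to `units`.
    """
    total = sum(forecasts.values())
    if total <= 0:
        return {store: 0 for store in forecasts}
    raw = {s: units * f / total for s, f in forecasts.items()}
    alloc = {s: int(r) for s, r in raw.items()}
    leftover = units - sum(alloc.values())
    by_remainder = sorted(raw, key=lambda s: raw[s] - alloc[s], reverse=True)
    for store in by_remainder[:leftover]:
        alloc[store] += 1
    return alloc


forecast = update_forecast(previous=100, observed=130, alpha=0.5)
print(forecast)
print(allocate(100, {"store_a": 30, "store_b": 70}))
```

Running the update on each new batch of point-of-sale data, rather than once per planning cycle, is the part that corresponds to "continuous" in the paragraph above.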

MarTech and AdTech interoperability is not just technical—it’s an operating model. Ensure your CDP, experimentation platform, and recommendation engine share schemas and unified identifiers so you can measure CAC versus LTV by cohort. Measurement should be tied to incremental lift tests, with clear KPIs for margin impact, stockouts avoided, and campaign efficiency. When a personalization experiment increases conversion but drives costly fulfillment, the net impact can be negative; measure holistically.
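
The point about measuring holistically comes down to simple arithmetic, sketched here with made-up numbers. The function and parameter names are illustrative, not part of any particular measurement platform.

```python
def net_margin_impact(incremental_orders, margin_per_order,
                      extra_fulfillment_cost_per_order, campaign_cost=0.0):
    """Net margin from an experiment after fulfillment and campaign costs."""
    per_order = margin_per_order - extra_fulfillment_cost_per_order
    return incremental_orders * per_order - campaign_cost


# A conversion win that loses money: each extra order costs more to
# fulfill than it earns in margin.
print(net_margin_impact(500, 12.0, 15.0))

# The same lift with cheaper fulfillment and a campaign cost.
print(net_margin_impact(500, 12.0, 4.0, campaign_cost=1000.0))
```

In the first case, 500 incremental orders at 12.0 margin and 15.0 extra fulfillment cost each produce a net loss of 1,500 despite the conversion lift.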

Finally, the operating model matters as much as the models. A cross-functional growth squad—combining data engineering, product managers, creative ops, and MLOps—keeps experiments fast and accountable. MLOps cadence should include model retraining windows, post-deployment monitoring for data drift, and a rollback plan for performance regressions. Put human review gates on creative AI outputs and inventory decisions that materially change customer experiences.
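
For post-deployment drift monitoring, one widely used heuristic is the population stability index (PSI), which compares a feature's distribution at training time with what the model sees in production. The sketch below is a basic equal-width-bin version; the bin count and the 0.25 rollback threshold are rule-of-thumb assumptions that each team should tune.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population stability index between a baseline and a live sample.

    Bins are equal-width over the baseline's range; live values outside
    that range are clamped into the first or last bin.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline has no spread to bin")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def should_roll_back(psi_value, threshold=0.25):
    """Flag the model for rollback review when drift exceeds the threshold."""
    return psi_value > threshold


baseline = [i / 100 for i in range(100)]
shifted = [0.9 + i / 1000 for i in range(100)]
print(should_roll_back(psi(baseline, baseline)))
print(should_roll_back(psi(baseline, shifted)))
```

A drift flag on its own says the inputs changed, not that performance dropped, so it works best as a trigger for the human review gate rather than an automatic rollback.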

Across both sectors, 2025’s lesson is pragmatic: powerful models expand what’s possible, but dependable value comes from disciplined integration, governance, and measurement. Hospital leaders should focus on HIPAA-compliant AI pilots that free clinician time and reduce denials; retail leaders should stitch together a first-party data strategy, GenAI content ops, and intelligent inventory systems that optimize both customer relevance and margin. The next phase won’t be about whether AI works — it will be about whether organizations have the processes, governance, and integrations in place to make it consistently work where it matters.